Skip Hovsmith

- Senior Consultant at Approov

Developer and Evangelist - Software Performance and API Security - Linux and Android Client and Microservice Platforms

Mobile apps commonly use APIs to interact with backend services and information. In 2016, time spent in mobile apps grew an impressive 69% year to year, reinforcing most companies' mobile-first strategies, while also providing fresh and attractive targets for cybercriminals. As an API provider, protecting your business assets against information scraping, malicious activity, and denial of service attacks is critical in maintaining a reputable brand and maximizing profits.

Properly used, API keys, tokens, and authorization play an important role in application security, efficiency, and usage tracking. Previously, in Part 1, we began discussing mobile security with a very simple example of API key usage and iteratively enhanced its API protection. In Part 2, we moved from keys to JWT tokens within several OAuth2 scenarios, eventually removing any user credentials and static secrets stored within the client. In Part 3, we discuss several attacks on OAuth2 authorization grant flows and common mitigations for them. We finish by extending the authorization mediator pattern introduced in Part 2 to offer best-practice security, while adapting to less secure OAuth2 implementations.

We will use OAuth2 [https://oauth.net/2/] terminology whenever possible. For this article, a client is a mobile application. A resource owner is the application user, and a resource server is a backend server interacting with the client through API calls. An authorization server, if present, will authenticate a resource owner's credentials and authorize limited access to a resource server. A user agent, acting on behalf of an authorization server, will gather resource owner credentials separately from a client.

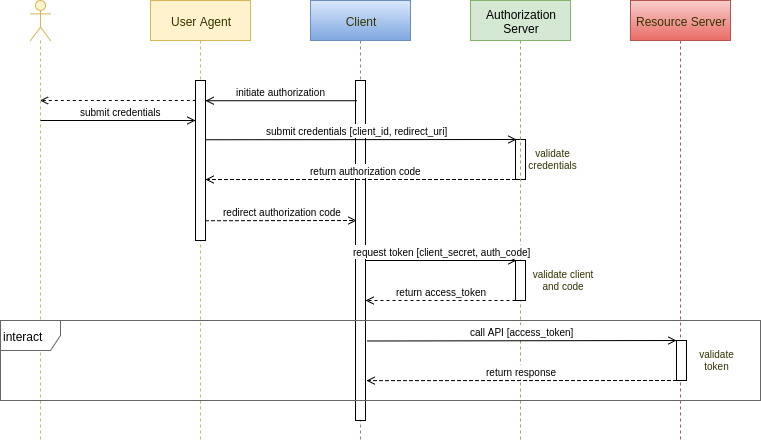

Here's the basic OAuth2 authorization grant flow:

Authorization is a two-step process. In the first step, the client delegates to a user agent, usually a browser. Through the user agent, the user as resource owner approves the client and presents credentials to the authorization server. The server returns an authorization code which is redirected back to the client. In the second step, the client authenticates itself and presents the authorization code back to the authorization server which, if satisfied, returns an access token.
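The two steps can be sketched from the client's side as follows. This is a minimal illustration, not a complete client: the endpoint, client id, and helper names are hypothetical, and the `state` parameter is an addition recommended by RFC 6749 that the description above does not cover. A confidential client would also authenticate itself in step two, which is omitted here.

```python
from urllib.parse import parse_qs, urlencode, urlparse

# Hypothetical endpoint and client registration, for illustration only.
AUTHORIZE_URL = "https://auth.example.com/authorize"
CLIENT_ID = "awesome-mobile-app"
REDIRECT_URI = "com.example.awesome:/redirect/here"


def build_authorization_url(state: str) -> str:
    """Step 1: the URL the client opens in the user agent."""
    params = {
        "response_type": "code",
        "client_id": CLIENT_ID,
        "redirect_uri": REDIRECT_URI,
        "state": state,  # opaque value echoed back in the redirect
    }
    return f"{AUTHORIZE_URL}?{urlencode(params)}"


def extract_authorization_code(redirect: str, expected_state: str) -> str:
    """Read the authorization code from the redirect returned to the client."""
    query = parse_qs(urlparse(redirect).query)
    if query.get("state", [""])[0] != expected_state:
        raise ValueError("state mismatch")
    return query["code"][0]


def build_token_request(code: str) -> dict:
    """Step 2: form fields the client posts to the token endpoint."""
    return {
        "grant_type": "authorization_code",
        "code": code,
        "redirect_uri": REDIRECT_URI,
        "client_id": CLIENT_ID,
    }
```

Nothing here talks to a network; the functions only build and parse the messages exchanged in each step.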

OAuth2 had a rather drawn out specification phase, and as a result, implementations vary between different service providers. Some optional features and newer extensions, which we'll describe below, have become important in preventing attacks which exploit the authorization grant flow.

For all scenarios, we assume that TLS techniques discussed in Part 1 are used to keep the communications channels secure.

OAuth2 distinguishes between public and confidential clients. A confidential client is able to protect a secret, while a public client cannot make that guarantee. The original OAuth2 spec, RFC6749, recommended that only confidential clients use the authorization grant flow, which uses a client secret for authentication, and allows the use of refresh tokens. Since public clients cannot protect a secret, they must use an implicit grant flow which does not authenticate the client nor allow refresh tokens.

OAuth2 considers mobile apps to be public clients. Despite RFC6749's recommendations, most authorization service providers elected to implement the authorization grant flow for their mobile apps, and recent IETF drafts for native OAuth2 clients now appear to require such an authorization grant flow. This provides the user convenience of refresh tokens but at greater risk unless an alternative to client secrets is used for client authentication.

Consider a straightforward authorization grant implementation. It relies on a client id, client secret, and, optionally, a fixed set of redirect URIs shared between client, authorization server, and resource server. As these are all static values within the client app, they cannot be considered secure. If an attacker reverse engineers these values, it is a simple matter to construct a fake app which both looks cosmetically genuine and appears perfectly authentic to the OAuth2 authorization grant flow. As There's a Fake App for That suggests, there are plenty of these apps causing mischief in the app stores.

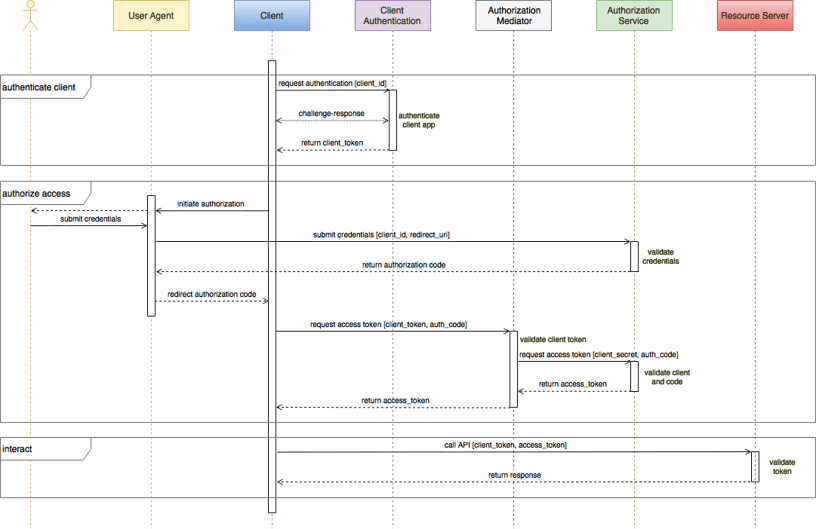

In Part 2, we discussed removing the client secret and replacing it with a dynamic attestation service.

In this flow, an authorization mediator checks the attestation service client integrity token for the authorization service. We will expand on this pattern later.

A dynamic attestation service, such as Approov, provides extremely reliable positive authentication of untampered apps. Being dynamic, this service authenticates the app during both phases of the authorization grant flow as well as frequently during authorized operation. As a result and without relying on client secrets, every authorized API call is made by an authentic app for an authorized user.

Several attacks on grant authorization involve observing the authorization code. One approach relies on modifying the redirect URI which is used to redirect the authorization code back through the user agent to the client. The modified URI returns the authorization code to a malicious client instead. Client administrators optionally register a whitelist of redirect URIs with the authorization service to prevent this.
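A server-side whitelist check might look like the sketch below. The registered URIs and the function name are hypothetical. The point it illustrates is that matching should be exact, since prefix or pattern matching tends to reopen the door to modified redirect URIs.

```python
# Hypothetical whitelist registered by the client administrator.
REGISTERED_REDIRECT_URIS = frozenset({
    "com.example.awesome:/redirect/here",
    "https://awesome.example.com/oauth/callback",
})


def is_registered_redirect(redirect_uri: str) -> bool:
    """Accept only exact matches against the registered whitelist."""
    return redirect_uri in REGISTERED_REDIRECT_URIS
```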

With mobile apps, things are more complicated. Mobile app redirect URIs typically use a custom URI scheme. For example, the URI

com.example.awesome:/redirect/here

might redirect from a mobile browser to example.com's awesome application running on the same device. These schemes must be registered with the operating system, and multiple applications can register against the same scheme. So even though the URI is preregistered with the authorization server, there is no guarantee that the correct application will receive the redirection.

With optional or exploitable static secrets, a malicious app could successfully convert the redirected authorization code into access and refresh tokens and start accessing the resource server. Imagine launching a real banking app, granting your permissions, presenting login credentials, and then wondering why your app seems stuck. While you are waiting, your authorization code has been stolen, and a malicious app is making API calls to empty your bank account.

To mitigate authorization code interception scenarios, the Proof Key for Code Exchange (PKCE) extension was added as a requirement for OAuth2 public clients.

When using the PKCE extension, with each authorization request, the client creates a cryptographically random code verifier key. Two parameters, a code challenge and a code challenge method, are added to the initial client authorization request. For the "plain" method, the code challenge value is the same as the code verifier key value, and the code challenge method is simple comparison.

When receiving the request, the authorization server notes the code challenge and code challenge method and returns the authorization code as usual.

To convert the received authorization code to a token, the client must present its original code verifier along with the authorization code. Using the code challenge method, which is simple comparison in the plain case, the server validates the presented code verifier against the code challenge it noted earlier before returning valid access and refresh tokens.

So even if a malicious client observes or receives the authorization code, it will be unable to present the correct code verifier to the authorization server.

If the authorization server wishes to remain stateless, it is acceptable for the server to cryptographically encode the code challenge and code challenge method into the authorization code itself.

Though the plain code challenge method is the default, it is really only for backward compatibility with initial implementations. The plain method fails if the attacker can observe the initial client request, which includes the code verifier in the clear.

An alternative and preferred challenge method is "S256". With S256, the code challenge is the base64url encoding, without padding, of the SHA-256 hash of the code verifier plain text.

Since the SHA-256 transformation is practically irreversible, even if the code challenge is observed by an attacker, they cannot provide the original plain text code verifier required to obtain the access token.
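Here is a minimal sketch of both sides of the PKCE exchange using only the Python standard library. The function names are our own, not part of any OAuth2 library, and the sketch assumes the verifier is an ASCII string as PKCE requires.

```python
import base64
import hashlib
import hmac
import secrets


def make_code_verifier() -> str:
    """Client: create a fresh random verifier for each authorization request."""
    # 32 random bytes encode to a 43-character URL-safe string.
    return secrets.token_urlsafe(32)


def s256_challenge(code_verifier: str) -> str:
    """Client: derive the S256 code challenge sent with the initial request."""
    digest = hashlib.sha256(code_verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def verify(code_verifier: str, code_challenge: str, method: str) -> bool:
    """Server: check the presented verifier against the noted challenge."""
    if method == "S256":
        candidate = s256_challenge(code_verifier)
    elif method == "plain":
        candidate = code_verifier
    else:
        return False
    return hmac.compare_digest(candidate, code_challenge)
```

The client keeps the verifier private until the token request; only the challenge travels with the initial authorization request.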

If PKCE is not implemented, dynamic attestation still provides a reasonably strong defense against hijacked authorization codes. Using the dynamic attestation flow above, whichever app receives the authorization code must properly attest in order to convert the authorization code into an access token. Assuming that only one instance of the authentic app can be running on the device, then only that authentic app will be able to convert the authorization code successfully.

It is possible that the malicious app could store the hijacked code and try to use it later or even pass the authorization code off device. Since it can only convert the authorization code to an access token from an attested client, it must take control of that client somehow and is limited to normal API call sequences through that client. Authorization code expiration times, device fingerprinting, and replay defenses can be added to frustrate these attacks.
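As one illustration of expiration and replay defense, the sketch below treats authorization codes as short-lived and single-use. The class name, the 60-second lifetime, and the in-memory dictionary are assumptions made for the example; a real service would use shared, persistent storage.

```python
import time


class AuthorizationCodeStore:
    """Tracks issued authorization codes so each can be redeemed once, and only briefly."""

    def __init__(self, ttl_seconds: float = 60.0, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock      # injectable so expiry can be tested
        self._issued = {}        # code -> expiry timestamp

    def issue(self, code: str) -> None:
        self._issued[code] = self._clock() + self._ttl

    def redeem(self, code: str) -> bool:
        # pop() removes the code whether or not it is still valid,
        # so a replayed code is always rejected.
        expiry = self._issued.pop(code, None)
        return expiry is not None and self._clock() <= expiry
```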

We introduced an authorization mediator in Part 2 to adapt the authorization grant flow to use a dynamic attestation service. This moved the vulnerable shared secret off the client and provided a reliable positive authentication approach without requiring any changes to the existing OAuth2 authorization service.

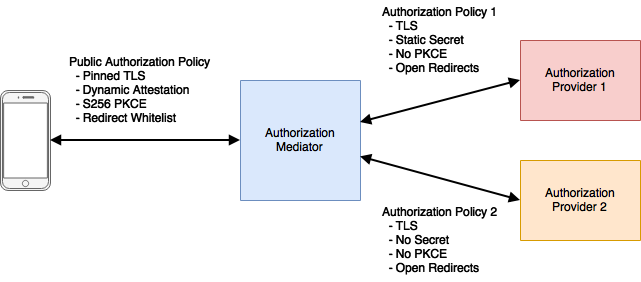

One of the challenges we alluded to earlier was the variety of inconsistent OAuth2 service implementations. Each service may ignore some required elements and/or require some optional ones. Implementations may also add some capabilities, like PKCE, over time.

If you want to directly interface with an authorization service, you are bound to whatever security policy it implements. As native apps are public, a "lite" implementation could be very insecure.

If you want to directly interface with multiple authorization services, then your client authorization library/SDK will grow and become difficult to manage. Any policy changes will require app updates.

Alternatively, if you use an authorization mediator, you can implement a single strong authorization policy between client and mediator, while the mediator itself can bridge to whatever policies are required by different OAuth2 authorization services. A consistent, best practice authorization policy is used by the public clients, where it is most needed, while the often weaker authorization provider policies are used in private behind the authorization mediator.
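To make the bridging idea concrete, here is a rough sketch of a mediator choosing what to send upstream based on each provider's policy. Everything named here is hypothetical: the provider table, the policy fields, and the URLs. How the mediator enforces its own policy toward the client is left out.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class ProviderPolicy:
    token_url: str
    supports_pkce: bool
    # Held by the mediator only; never shipped inside the mobile app.
    client_secret: Optional[str] = None


# Hypothetical providers with different security policies.
PROVIDERS: Dict[str, ProviderPolicy] = {
    "strict": ProviderPolicy("https://strict.example.com/token", True),
    "legacy": ProviderPolicy("https://legacy.example.com/token", False,
                             "mediator-held-secret"),
}


def build_upstream_token_request(
    provider_name: str, code: str, code_verifier: str
) -> Tuple[str, Dict[str, str]]:
    """Translate one client-side request into whatever the provider expects."""
    policy = PROVIDERS[provider_name]
    form = {"grant_type": "authorization_code", "code": code}
    if policy.supports_pkce:
        form["code_verifier"] = code_verifier
    if policy.client_secret is not None:
        form["client_secret"] = policy.client_secret
    return policy.token_url, form
```

Adding a provider or changing its policy means editing the mediator's table, not shipping a new app.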

Service policy upgrades and additional service providers can be added at the mediator without requiring client upgrades.

If using a dynamic attestation service, the mediator could be used as a front end to both app authorization and resource servers. For resource servers, it would filter out any invalid client requests before they hit the backend resource servers. This mediator could be integrated with existing WAF or API gateway solutions.
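A filter of this kind could be as simple as the sketch below. It assumes the client integrity token is a JWT signed with HS256 using a secret shared between the attestation service and the mediator, and that it carries an `exp` claim. Those details, and the function names, are assumptions for illustration and not a description of any particular attestation service's API.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_token(claims: dict, secret: bytes) -> str:
    """Stand-in for the attestation service, used here only to make test tokens."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    tag = hmac.new(secret, f"{header}.{payload}".encode("ascii"), hashlib.sha256)
    return f"{header}.{payload}.{_b64url_encode(tag.digest())}"


def is_request_allowed(token: str, secret: bytes, now=None) -> bool:
    """Mediator check: forward the API call only if the token verifies and is unexpired."""
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        if not isinstance(header, dict) or header.get("alg") != "HS256":
            return False
        signed = f"{header_b64}.{payload_b64}".encode("ascii")
        expected = hmac.new(secret, signed, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):
            return False
        claims = json.loads(_b64url_decode(payload_b64))
    except ValueError:
        # Covers malformed structure, bad base64, and bad JSON.
        return False
    if not isinstance(claims, dict):
        return False
    exp = claims.get("exp")
    current = time.time() if now is None else now
    return isinstance(exp, (int, float)) and current < exp
```

Requests that fail the check are dropped at the mediator and never reach the backend.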

Using this approach, we are able to protect every API call made to our resource servers validating both user and app authenticity, independent of the capabilities of the authorization service used.

In Part 1, we demonstrated use of client secrets and basic user authentication to protect API usage. In Part 2, we introduced JWT tokens and described several OAuth2 user authentication schemes. On mobile devices, static secrets are problematic, so we replaced static secrets with dynamic client authentication, again using JWT tokens. Combining both user and app authentication services provides a robust defense against API abuse. In Part 3, we discussed a few threat scenarios and extended the authorization mediator to provide strong authorization regardless of the strength of the supported OAuth2 providers.