Recently I was doing some API analysis on a video sharing app aimed at the teenage market. As is typical in these types of apps, before you can do anything you need to sign up with an account. You’d think that would be straightforward enough, right?

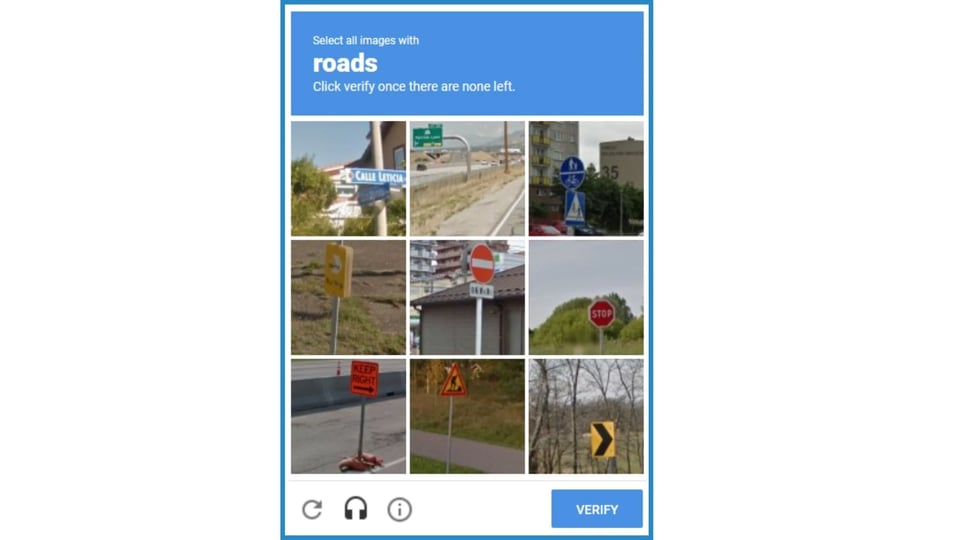

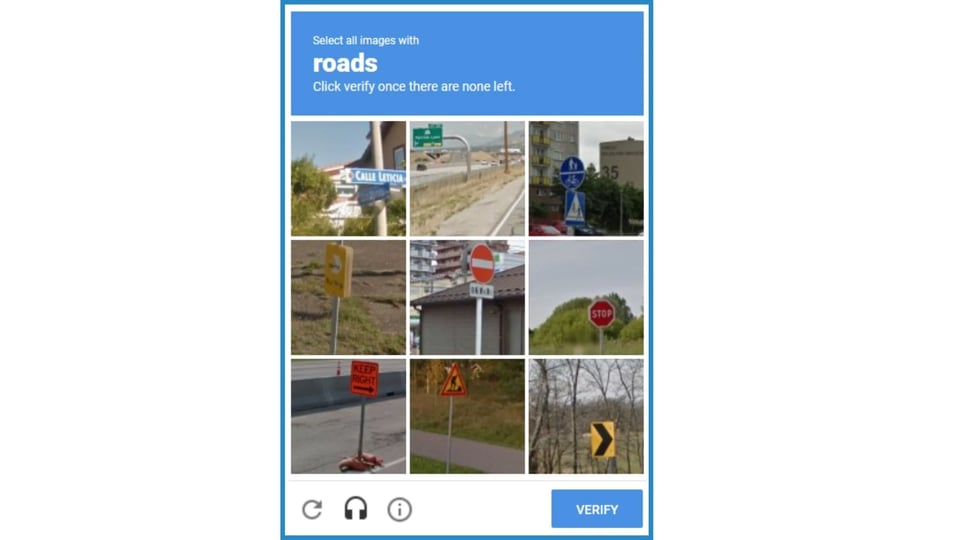

Click Verify Once There Are None Left

After entering the usual information, such as name and email address, I was confronted with a Google Captcha. Normally these consist of a single page where you click the box saying you are not a robot. Then you have to actually prove it by selecting some squares correctly. This wasn’t a normal Captcha, however. With the subtext “Click Verify Once There Are None Left”, this one seemed to go on forever.

I was trapped in this particular circle of hell for somewhere close to 10 minutes, confronted with wave after wave of pictures with instructions to select the signs, shop fronts or roads. As each square is clicked a new one appears, seemingly more difficult than the last. When you're asked to select a sign, does that include the pole? What if there is a tiny bit in the corner of a square - does that count? And what about roads: do I need to see the tarmac, or is a road sign evidence enough of the presence of a road? Correct answers to these conundrums must be the key to my humanity, and it was being sorely tested. Maybe I was doing badly and this was why it was taking so long. I honestly didn’t know if I was going to get through it, or face the indignity of being declared robotic after all.

Disrupting the Economics of Fake Accounts

So why put potential users through all this pain? At this stage it is pure friction in the flow. I needed to do this just to get into the app to try it out. I could have easily given up or been rejected at this point, as I’m sure many have before me. The reason, of course, is that this particular app is quite infamous for having a lot of non-human users. It is a social app that allows person-to-person messaging, which creates the perfect environment for spam bots to dwell. So I suspect that a lot of the would-be sign ups are from our robot cousins: scripts designed to go through the steps of creating a new account, so they can get to their important business of sending spam, click bait and phishing attacks to unsuspecting real human users.

The fake accounts need to be signed up in bulk so that the traffic generated by any individual account isn’t sufficient to trigger the behavioural controls in the system. If a bot does overstep the mark with its phishing and spam activities and gets banned, then one of the regular stream of new robot recruits is ready to step in and take its place.

Simple Captchas are designed to perform bot mitigation and block any attempts at automating signups. The problem, of course, is that, like everything else, there is an API for that. Services such as 2Captcha and AntiCaptcha allow Captchas to be solved, at a very low bulk price, by having the automated script call an API. The script passes the Captcha image to the API, and behind the service sits a human army of low-paid workers ready to solve Captchas at a moment’s notice as they are fed them from the sign up scripts. Humans enslaved by the robots.

Solving Captchas then simply becomes a question of economics. Is the cost to solve the Captcha greater than the potential value of the fake account? If not then the next logical step is to make the Captcha longer and more complicated until the new price equilibrium is reached. I think this is what we are seeing here. Real humans caught in the crossfire as the fake account war rages, forced to solve ever longer and more tedious proofs of humanity.
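To make that arithmetic concrete, here is a minimal sketch in Python. The function names are my own and the amounts are made-up illustrative numbers, not real prices from any solving service or real account values from this app.

```python
# All amounts are in hundredths of a cent so the arithmetic stays in integers.
# Every figure passed to these functions is hypothetical.

def captcha_deters_bot(account_value: int, price_per_round: int, rounds: int) -> bool:
    """True if solving the whole Captcha costs more than the fake account is worth."""
    return price_per_round * rounds > account_value


def rounds_to_deter(account_value: int, price_per_round: int) -> int:
    """Smallest number of Captcha rounds whose total solving cost exceeds the account value."""
    return account_value // price_per_round + 1


# Hypothetical example: an account worth 5 cents, a solving price of 0.1 cents per round.
account_value = 500
price_per_round = 10
print(captcha_deters_bot(account_value, price_per_round, rounds=1))  # a single round is too cheap to deter
print(rounds_to_deter(account_value, price_per_round))               # rounds needed before the bot gives up
```

With these invented numbers the Captcha would need 51 rounds before it stops paying for the bot operator, which is the kind of escalation a real human then has to sit through as well.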

The Impact on Signup Conversion

There is a not so hidden cost, of course: that of the abandoned sign ups where potential customers simply give up and don’t bother with the app after all. Customers are notoriously fickle, especially before they have bought into using a particular app, and it doesn’t take much friction to put them off. This is a well known and widely discussed issue, especially in the world of web sites. Depending on the complexity of the Captcha and the type of app, the impact could easily be several percentage points. However, customers are not going to be impressed by a spam-ridden platform either. That is also a sure way to lose customers. So how can a better trade-off be made?

A New Factor in Blocking Fake Onboards and Automation

Automated bot scripts generally work by spoofing their requests as if they are coming from a real mobile app. The real app isn’t there though, just some code that fakes the required requests, either to onboard a fake account or to subsequently send out the spam. Doing this manually using the real app simply isn’t economically practical.

So a much better way of blocking fake onboarding and automation scripts, also known as bot detection, is to make sure that it is actually the official app that is calling your API backend. App legitimacy is what is needed here. In other words, establishing the authenticity and integrity of the mobile app, without using embedded secrets stored in the app that can be reverse engineered and abused. Although it is conceivable that the real app's actions could be automated using some kind of automation framework, this is considerably more difficult to achieve reliably. Moreover, attempts to do this from an emulator are easily detected. Automation from a real device can also be detected and, in any case, needing real devices with real app instances to generate spam fundamentally undermines the economics of the fake bot accounts.
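As a rough illustration of what the backend side of such a check could look like, here is a minimal sketch in Python. It assumes a design that this post does not spell out: an external attestation service verifies the app and hands it a short-lived token, signed with a secret shared only between that service and the backend, never shipped in the app. The token format and the names `issue_token` and `is_request_from_genuine_app` are hypothetical and do not represent any particular product's API.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


def _b64url_decode(text: str) -> bytes:
    # Restore the padding that _b64url_encode stripped.
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def issue_token(secret: bytes, expires_at: int) -> str:
    """Stand-in for the (assumed) attestation service, included only so the sketch runs."""
    payload = _b64url_encode(json.dumps({"exp": expires_at}).encode())
    signature = hmac.new(secret, payload.encode(), hashlib.sha256).digest()
    return payload + "." + _b64url_encode(signature)


def is_request_from_genuine_app(token: str, secret: bytes, now: Optional[float] = None) -> bool:
    """Backend check: accept the request only if the token is correctly signed and unexpired."""
    try:
        payload, signature = token.split(".")
        expected = hmac.new(secret, payload.encode(), hashlib.sha256).digest()
        # Constant-time comparison avoids leaking how much of the signature matched.
        if not hmac.compare_digest(expected, _b64url_decode(signature)):
            return False
        claims = json.loads(_b64url_decode(payload))
        return claims["exp"] > (time.time() if now is None else now)
    except (ValueError, KeyError, TypeError):
        # Malformed tokens are treated the same as forged ones.
        return False
```

The point of the design is that a script faking the app's requests has no way to mint a valid token, because the signing secret lives with the attestation service and the backend rather than inside the app binary.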

So with this new authentication factor in place we can return to a low friction sign up process that keeps the bots out and maintains good customer conversion rates, without needing that lengthy enquiry into our humanity.